NVIDIA RTX - Excellent Performance but what about the Value?

Author: Dennis Garcia

Introduction

On September 19th NVIDIA lifted the embargo on the new RTX 20-Series GPUs, which are based on the new Turing architecture. The internet was feverish with rumors indicating that the new GPUs would be super powerful and would decimate all. For the most part the rumors were correct, with reviews showing major performance increases across the board.

For instance, Kingpin from EVGA posted several 3DMark world records using the RTX 2080 Ti, a prelude to what EVGA will have in store when it comes to extreme overclocking. To put the new records in perspective, Kingpin posted a score of 18925 with a clock speed of 2415MHz using LN2, which is over 200 points above the previous world record held by Splave using a Titan V on a water chiller. Both cards were heavily overclocked; however, the effort to attain the 2080 Ti record pushed the entire subsystem quite a bit harder.

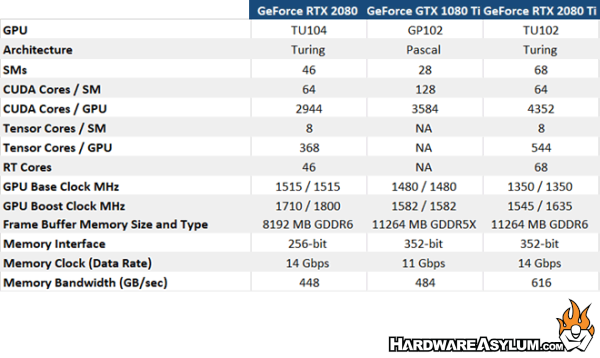

Raw performance aside the RTX 2080 Ti (and the entire 20-Series) brings with it a number of enhancements including:

- more CUDA cores

- Tensor cores

- Ray Tracing cores

- GDDR6

- 352-bit memory bus

- 616 GB/sec of memory bandwidth

- 11GB frame buffer

- 1350MHz Core Clock with a 200MHz Boost

Contrast that with the current generation GTX 1080 Ti that features:

- GDDR5X

- 352-bit memory bus

- 484 GB/sec of memory bandwidth

- 11GB frame buffer

- 1480MHz Core Clock with a 100MHz Boost

Major architecture differences aside, looking at the specifications it is pretty clear that most of the performance increases come from the additional cores and better memory bandwidth. Everything else has been added just to support the new technologies like deep learning, DLSS and Ray Tracing.
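To put a number on the bandwidth side of that claim, here is a rough sketch of how peak memory bandwidth falls out of bus width and per-pin data rate. The 11 Gbps GDDR5X data rate for the GTX 1080 Ti is an assumption on my part (it is not listed above); the 616 GB/sec figure is simply the one quoted in the list.

```python
def memory_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: bus width (bits) * per-pin rate (Gbps) / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


# GTX 1080 Ti: 352-bit bus from the list above; 11 Gbps per pin is an assumed figure.
gtx_1080_ti = memory_bandwidth_gb_s(352, 11)

# RTX 2080 Ti: use the bandwidth quoted in the list rather than deriving it.
rtx_2080_ti_quoted = 616

# Fractional increase in peak bandwidth from one generation to the next.
uplift = rtx_2080_ti_quoted / gtx_1080_ti - 1

print(f"GTX 1080 Ti: {gtx_1080_ti:.0f} GB/s")
print(f"RTX 2080 Ti bandwidth uplift: {uplift:.1%}")
```

Under those assumptions the GTX 1080 Ti lands on its quoted 484 GB/sec, and the RTX 2080 Ti has a little over a quarter more peak bandwidth to work with.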

With that being said, this article isn’t so much about quoting performance specs (yes I can read the manual) and overclocking results as I would much rather talk about the media hype, enthusiast rumors vs what was actually delivered.

As a premier PC hardware enthusiast I get excited when new hardware launches as it brings with it the promise of better performance and headroom to support new technologies. However, there is a dark side, a curse that plagues many early adopters who often pay too much for shiny new parts only to find they are actually worse than what they already have.

A good example of this is my fabled GeForce 3 incident of 2001 where I was duped into trading my $600 USD Hercules GeForce 2 Ultra for a shiny new Hercules GeForce 3. Ever since, I have been a little gun-shy when it comes to new video cards, and the RTX 20-Series is a prime example.

GeForce 3 was the first DirectX 8.0 compliant video card, and at the time that was the feature most heavily marketed. Thing was, game developers were slow to adopt the new technology, making the DX8 benefit a little moot in the big picture. Likewise, the GeForce 3 had the same theoretical pixel and texel throughput as the GeForce 2 and was clocked 20% slower than the GeForce 2 Ultra that I traded in. Bottom line, the new GF3 was a GF2 with a few tweaks, which made me a sad panda.

Let’s now look at the new RTX 2080 and RTX 2080 Ti. If you search the googlesphere you’ll find a number of reviews for the new GPUs. Sadly NVIDIA passed over Hardware Asylum for the RTX launch, so I’m going to send you over to HotHardware to look at their 3DMark Time Spy charts. There you’ll find a 1000 point (12%) difference between the GTX 1080 Ti and RTX 2080. That gap increases to 4000 points (30%) when you consider the RTX 2080 Ti, while hot-clocked versions of the RTX 2080 hover around a 1500 point (14%) gain, which is something you can get by simply overclocking a GTX 1080 Ti.

Now, I know what you are thinking. “More performance is better, give me 2x RTX 2080 Tis!”

NVIDIA’s response will be “Gladly, that will be $2400 USD, will that be cash or charge?”

Ouch! Sadly, the RTX 2080 isn’t much better and will set you back $800 USD, making a used GTX 1080 Ti look pretty tasty, assuming you can get one.

I have mentioned before that the new pricing structure seems like a corporate response to what happened during the mining crisis. In case you were not aware, demand shot through the roof during that time, which prompted uncontrolled price gouging due to poor supply. Thing is, consumers kept buying, which set the precedent that the public is willing to spend that kind of money on a quality product. Of course, when companies pack more technology into a platform the price will naturally rise. However, $1200 USD for an RTX 2080 Ti is “Titan” card territory and not a place many consumers have dabbled in.
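For a rough feel of what those prices buy, here is a back-of-the-envelope sketch of performance per dollar. It uses the Time Spy gaps quoted earlier (12% and 30% over a GTX 1080 Ti) and the RTX prices mentioned in this article. The $699 figure for the GTX 1080 Ti is an assumption (its launch price, not a used or current street price), so swap in whatever you can actually find one for.

```python
def perf_per_kilodollar(relative_perf: float, price_usd: float) -> float:
    """Relative performance (GTX 1080 Ti = 1.00) delivered per $1000 spent."""
    return relative_perf / price_usd * 1000


# name: (performance relative to a GTX 1080 Ti, price in USD)
cards = {
    "GTX 1080 Ti": (1.00, 699),   # ASSUMED price, not taken from the article
    "RTX 2080": (1.12, 800),      # 12% Time Spy gain, $800
    "RTX 2080 Ti": (1.30, 1200),  # 30% Time Spy gain, $1200
}

value = {name: perf_per_kilodollar(perf, price) for name, (perf, price) in cards.items()}

# Print best value first.
for name, score in sorted(value.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {score:.2f} relative performance per $1000")
```

With those inputs the older card still comes out slightly ahead on value, the RTX 2080 is close behind, and the RTX 2080 Ti trails both. The ranking is only as good as the price you plug in for the GTX 1080 Ti.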

Getting back to the subject of RTX performance, there was another group of people interested to see what NVIDIA would deliver: the mighty investor. This group stands to make or lose money based on market performance, and if the company delivers a dud then they are going to jump ship.

On September 21st I was checking the market summary for NVDA (the NVIDIA stock symbol) and noticed a lot of red at the closing bell. Right under the chart was an article from CNBC titled “Nvidia shares fall after Morgan Stanley says the performance of its new gaming card is disappointing”. It is amazing what a single article can do, and in reality investors don’t care about Ray Tracing or Tensor cores. They care about what sells, and in this instance they believe the consumer will be driven by gaming performance. It is an easy metric to judge against, and if the new card cannot deliver, that doesn’t bode well for prospective sales.

As I mentioned before, I’m a hardware enthusiast. I like hardware and get excited when new stuff comes to market. When it comes to the GeForce RTX 20-Series I am glad to see NVIDIA building more gaming technology into their GPUs and feel it would be amazing for wide screen gamers and the early adopters of 4K. Also, unlike with the GeForce 3 (back in the day), there are games ready to take advantage of Ray Tracing, and DLSS should just work out of the box.

However, here is the thing.

If you dissect the GPU we have three basic systems: CUDA, Tensor and RT (Ray Tracing). CUDA is what current games rely on for video processing. The RTX 2080 Ti features 4352 cores across 68 SM units (64 cores each), the RTX 2080 features 2944 cores across 46 SM units (64 cores each) and the GTX 1080 Ti comes with 3584 cores across 28 SM units (128 cores each). Turing-based CUDA cores are faster than Pascal ones, so that is how the RTX 2080 can be faster than the GTX 1080 Ti. (Incidentally, the core count drop also makes the RTX 20-Series less attractive to GPU miners.)
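The relationship between core counts and SM units is simple division, sketched below. The per-SM figures (64 CUDA cores per Turing SM, 128 per Pascal SM) are an assumption drawn from NVIDIA's published architecture specs, not something taken from the benchmarks discussed here.

```python
# ASSUMED cores-per-SM figures for each architecture.
CORES_PER_SM = {"Turing": 64, "Pascal": 128}

# name: (architecture, CUDA core count as quoted in this article)
gpus = {
    "RTX 2080 Ti": ("Turing", 4352),
    "RTX 2080": ("Turing", 2944),
    "GTX 1080 Ti": ("Pascal", 3584),
}


def sm_count(arch: str, cuda_cores: int) -> int:
    """Number of SM units implied by a core count and the per-SM core width."""
    sms, remainder = divmod(cuda_cores, CORES_PER_SM[arch])
    if remainder:
        raise ValueError(f"{cuda_cores} cores do not divide evenly into {arch} SMs")
    return sms


sm_units = {name: sm_count(arch, cores) for name, (arch, cores) in gpus.items()}

for name, sms in sm_units.items():
    print(f"{name}: {sms} SM units")
```

Note that Turing ends up with more, narrower SM units than Pascal, so a raw CUDA core count by itself does not tell you how the two generations compare.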

Tensor and RT cores are basically tacked on as they occupy a separate region of the GPU. This leads me to believe that you could simply dedicate an unused GTX 1060 to handle Ray Tracing and DLSS similar to what we did with PhysX back in the day. In doing so you could enable everything that makes RTX “special” and feature it across all current GPU platforms which begs the question of “Why did NVIDIA bother in the first place?”

Overall it really gives you something to consider when looking at new video cards. Do the RTX features really warrant an upgrade? And, if so, do you spend $1200 USD on the RTX 2080 Ti? Or do you spend $800 USD on an RTX 2080 and get GTX 1080 Ti performance? I’m not sure there is a clear answer. The writing on the wall tells me the same thing the investors are hearing. RTX is pretty darn cool but not cool enough to really warrant any serious thought.