Overclocking Competitions: About the Player, Not the Hardware

Author: Dennis Garcia

Applying This to Live Overclocking

In a live, challenge-based overclocking competition, the idea is to remove the “bin” (the luck of getting an exceptionally good chip) from the equation and let the skill of the overclocker take over. Overclockers need to know the hardware they are using and how it behaves in the benchmarks they will be running. They also need to prepare for the competition by properly insulating their hardware and knowing how to maintain their overclocks, because any disruption will almost certainly mean disaster.

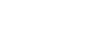

The idea is simple and can be executed in a variety of ways. In one example, overclockers are given a set of timed stages. Each stage has a series of score-based challenges, and overclockers are awarded points based on how they reached each goal and how accurate their results were. This process is repeated until all of the challenges are complete.

Time is the only constant and plays a huge role in this style of competition. If an overclocker misses the benchmark target or crashes during a run, they may have to scramble for a score or accept the penalty and move on to the next challenge. Example challenges might look like this:

- SuperPi 32M at 5.8GHz: reach 5min 30sec (+/- 1 second)

- 3DMark 11 Performance: reach 22500 marks (+/- 200 marks)
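The accuracy rule behind these challenges can be expressed as a simple check. The sketch below is illustrative only: `parse_time` and `within_tolerance` are hypothetical helpers, times are assumed to be whole seconds, and a result is assumed to count as a hit when it lands within the stated tolerance on either side of the target.

```python
import re


def parse_time(text: str) -> int:
    """Hypothetical helper: convert a time such as '5min 30sec' into whole seconds."""
    match = re.fullmatch(r"\s*(\d+)\s*min\s*(\d+)\s*sec\s*", text)
    if match is None:
        raise ValueError(f"unrecognised time: {text!r}")
    minutes, seconds = (int(g) for g in match.groups())
    return minutes * 60 + seconds


def within_tolerance(result: float, target: float, tolerance: float) -> bool:
    """Hypothetical rule: a hit is any result inside target +/- tolerance (inclusive)."""
    return abs(result - target) <= tolerance


# SuperPi 32M challenge: 5min 30sec, +/- 1 second
superpi_target = parse_time("5min 30sec")
print(within_tolerance(parse_time("5min 31sec"), superpi_target, 1))
print(within_tolerance(parse_time("5min 28sec"), superpi_target, 1))

# 3DMark 11 Performance challenge: 22500 marks, +/- 200 marks
print(within_tolerance(22650, 22500, 200))
print(within_tolerance(22750, 22500, 200))
```

Because the goal is accuracy rather than raw speed, the same check works for a time (where lower is normally better) and a mark score (where higher is normally better): overshooting the target misses just as surely as falling short.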

Another variation is an ascension (ladder) style, such as hitting progressively faster times in SuperPi 32M:

- 6min 0sec (+/- 5 seconds)

- 5min 47sec (+/- 5 seconds)

- 5min 27sec (+/- 4 seconds)

- 5min 10sec (+/- 3 seconds)

- 4min 55sec (+/- 2 seconds)

If you figure that each run takes about 10 minutes to set up and complete, the overclocker should be able to finish this stage in about an hour. However, reaching those times is harder than it sounds.

In the chart above, points would be awarded based on how close the competitor gets to the target score and reduced for falling outside the variance. For instance, submitting a score of exactly 6 minutes on the first rung of the ladder would earn full points, while a score of 6min 10sec might earn only a fraction of the points or none at all, depending on how strict the judges want to be. Run 6 in the chart is blank because the competitor either ran out of time or submitted a score outside the tolerance.
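The exact penalty is left to the judges, so any formula is a guess. One possible rule, sketched here purely as an assumption, awards full points inside the tolerance and then reduces them linearly until a cutoff (arbitrarily set at three times the tolerance) where the run earns nothing. `MAX_POINTS`, `CUTOFF_FACTOR` and `score_run` are invented for illustration, and the submitted times are made up; only the ladder targets come from the list above.

```python
MAX_POINTS = 10        # hypothetical: points available per run
CUTOFF_FACTOR = 3.0    # hypothetical: zero points beyond 3x the tolerance


def score_run(result: float, target: float, tolerance: float) -> float:
    """Hypothetical rule: full points within tolerance, linear falloff to zero at the cutoff."""
    error = abs(result - target)
    if error <= tolerance:
        return float(MAX_POINTS)
    cutoff = CUTOFF_FACTOR * tolerance
    if error >= cutoff:
        return 0.0
    # Scale points by how much of the penalty band is still left
    return MAX_POINTS * (cutoff - error) / (cutoff - tolerance)


# SuperPi 32M ascension ladder: (target seconds, tolerance seconds)
ladder = [(360, 5), (347, 5), (327, 4), (310, 3), (295, 2)]
# Made-up submitted times in seconds; None means the run was never submitted
results = [370, 351, 336, 311, None]

total = 0.0
for (target, tol), result in zip(ladder, results):
    points = 0.0 if result is None else score_run(result, target, tol)
    total += points
    print(f"target {target}s +/- {tol}s, submitted {result}: {points:.2f} pts")
print(f"ladder total: {total:.2f}")
```

Under these assumptions the 6min 10sec run on the first rung earns half points, and the unsubmitted final run earns nothing. Tying the cutoff to each rung's own tolerance means the tighter rungs near the top of the ladder also punish misses more harshly.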

We can apply the same ascension style to a 3D benchmark, where the overclocker could decide whether to use a single high-end graphics card or opt for an SLI or CrossFire setup using lower-end video cards. The alternative approach may make the targets easier to reach, but at the cost of a few points.

Allowing multiple paths to complete these challenges also opens the competition up to swapping major hardware components between stages or even between challenges, for instance using a Core i7 4770K on Z97 for SuperPi and switching over to a Core i7 5960X on X99 for the 3D benchmarks.

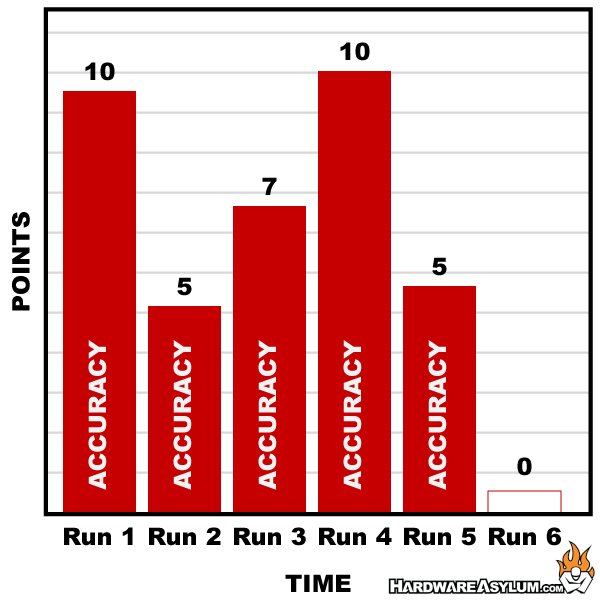

In a sample live competition, you might get a scorecard like the one shown above. The actual scores and variances have been left out to keep things simple. The event consists of three benchmarks: SuperPi 32M, Heaven DX11 and 3DMark 06. The number of scores you have to submit in each benchmark determines the “weight” of that section: SuperPi requires 6 scores for full points, Heaven 4 and 3DMark 5.

For an added challenge, the time for each benchmark is set so that most competitors cannot reach the final run in each stage. In this example, the SuperPi result is “typical”, while getting all 4 scores in Heaven is excellent. The competitor missed two runs in 3DMark and did not score very well there; however, a near-perfect middle stage brought them to a total of 91 points.
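The weighting idea can be sketched by making every run worth the same number of points, so a section that requires more runs carries more weight. This is a hypothetical model, not the scoring used for the sample scorecard: `POINTS_PER_RUN`, `section_points` and the per-run accuracy fractions (1.0 meaning dead on target) are all invented, and they are not meant to reproduce the 91-point total. Only the required run counts come from the scorecard description.

```python
POINTS_PER_RUN = 10  # hypothetical: equal value per run, so more runs = more weight

# Runs required for full points in each section (from the sample scorecard)
REQUIRED_RUNS = {"SuperPi 32M": 6, "Heaven DX11": 4, "3DMark 06": 5}

# Invented accuracy fractions for each submitted run (1.0 = dead on target).
# Runs that were never submitted are simply absent and earn nothing.
submitted = {
    "SuperPi 32M": [1.0, 0.9, 1.0, 0.8, 0.7],  # one run short: "typical"
    "Heaven DX11": [1.0, 1.0, 0.95, 1.0],      # all 4 runs, near perfect
    "3DMark 06": [0.6, 0.5, 0.4],              # two runs missed, poor accuracy
}


def section_points(name: str, accuracies: list[float]) -> float:
    """Hypothetical: sum per-run accuracy, ignoring anything past the final run."""
    runs = accuracies[: REQUIRED_RUNS[name]]
    return POINTS_PER_RUN * sum(runs)


max_total = POINTS_PER_RUN * sum(REQUIRED_RUNS.values())
total = 0.0
for name, required in REQUIRED_RUNS.items():
    runs = submitted.get(name, [])
    earned = section_points(name, runs)
    total += earned
    print(f"{name}: {earned:.1f} / {POINTS_PER_RUN * required} "
          f"({len(runs)} of {required} runs)")
print(f"total: {total:.1f} / {max_total}")
```

In this model a missed run costs a full run's worth of points, while a sloppy run still earns part of its value, which is exactly the trade-off between speed and accuracy that competitors have to weigh.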

As you can imagine, the points can swing widely, which forces the competitor to think strategically. They can take their time and aim for the most points per run, possibly missing the final run, or they can blindly submit scores in the hope of getting all of their runs in. Either option can pay off or end in disaster, and with the ascension model described above, the tolerances get tighter with each run, adding to the challenge and the skill required.