Balancing Model Quality and Hardware Demands in AI Workstations

Author: Dennis Garcia

AI Hardware vs Memory

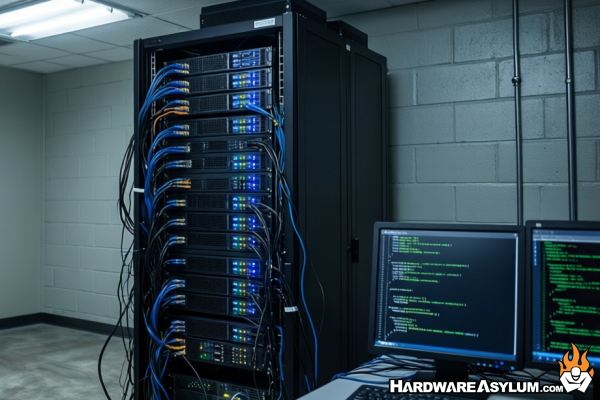

The first question you need to ask is “Why bother building a dedicated AI Workstation?” There are plenty of articles about running AI models on a Raspberry Pi or running DeepSeek R1 on a Mac Mini, so if those systems are good enough, why build something different? To better understand the difference, let’s contrast them with the hardware requirements for running Google Gemini, where the model requires an entire water-cooled datacenter with an onsite nuclear reactor.

One of these implementations is useful, whereas the other is so slow that the token rate (tokens per second) isn’t published.

At the lowest level, what we are talking about is memory capacity and scale. Google Gemini needs to handle millions of concurrent users, and the Gemini-3 LLM is massive, with an estimated 21,539B (billion) parameters. These parameters span language, graphics, programming and historical facts, weaving the relationships together to provide the basis for the knowledge and understanding the model needs to function. In terms of scale, it takes a massive amount of power to process that data one token at a time across millions of active users.

Open Source and hobby models are considerably smaller and can range from 200M (million) parameters to 600B or more. While these models can be used at scale, they are mostly intended for a single user or a small handful of users.
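To put those parameter counts in terms of memory, here is a rough sketch in Python. It estimates the space needed for the model weights alone, assuming each parameter is stored at a fixed precision (16 bits by default). The function name `weight_memory_gb` and the precisions shown are my own illustration, and real-world usage will be higher once runtime overhead is included.

```python
def weight_memory_gb(num_params: int, bits_per_param: int = 16) -> float:
    """Approximate memory for model weights only, in GB (1 GB = 1e9 bytes).

    Assumption: every parameter is stored at the same precision.
    Runtime overhead (context, buffers, the OS) is NOT included.
    """
    total_bytes = num_params * bits_per_param / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    # The two ends of the range mentioned above: 200M and 600B parameters.
    for label, params in [("200M", 200_000_000), ("600B", 600_000_000_000)]:
        for bits in (16, 8, 4):
            size = weight_memory_gb(params, bits)
            print(f"{label} parameters at {bits}-bit: {size:,.1f} GB")
```

At 16 bits per parameter, the small end of the range needs well under 1 GB for its weights, while the large end needs roughly 1,200 GB, which is why memory capacity dominates the hardware discussion.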

For the purposes of this article, I am going to focus on the Open Source and hobby models that can be run on consumer and workstation-class hardware. To start, let’s discuss AI model architecture and memory requirements.

There are four components required to run an LLM/SLM (Large Language Model / Small Language Model). I may refer to these as AI models later in the article, since the real distinction is often parameter count and/or the intended function of the model.