TurboQuant - the Pied Piper Technology for AI

I'm about a week behind on learning about Google's new compression method, TurboQuant. TurboQuant is a two-part compression algorithm that restructures the KV cache to make it not only smaller but also faster and more accurate.

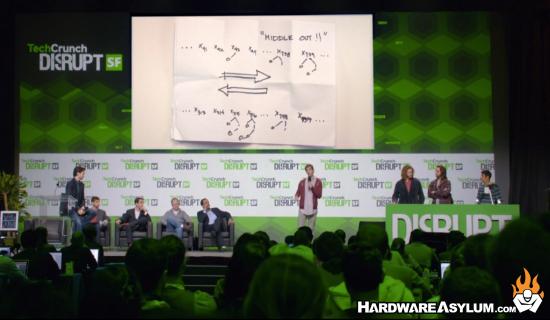

For those who remember the show "Silicon Valley," the Pied Piper reference will make sense, and I find it ironic that TechCrunch is drawing the parallel.

The joke is a reference to the fictional startup Pied Piper that was the focus of HBO’s “Silicon Valley” TV series that ran from 2014 to 2019.

The show followed the startup’s founders as they navigated the tech ecosystem, facing challenges like competition from larger companies, fundraising, technology and product issues, and even wowing the judges at a fictional version of TechCrunch Disrupt.

Pied Piper’s breakthrough technology on the TV show was a compression algorithm that greatly reduced file sizes with near-lossless compression. Google Research’s new TurboQuant is also about extreme compression without quality loss, but applied to a core bottleneck in AI systems. Hence, the comparisons.

One of the biggest problems with compression is data loss. When you save a JPG, the image compression introduces artifacts that can degrade image quality. Because data is actually removed, the file is smaller but does not look as good. DVD movies have a similar problem, as does AI model quantization.

When you quantize an AI model, you reduce the numeric precision of its vectors, so instead of a 16-bit or 32-bit number each value gets a 4-bit representation. Simply dropping the extra bits lowers the memory requirements, but you lose a fair amount of precision. Most of the time this doesn’t matter, but it can introduce hallucinations, which are one of the biggest problems associated with using AI models.
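To make the trade-off concrete, here is a minimal sketch of plain uniform 4-bit quantization in Python. It is not TurboQuant and not how any particular model is quantized; the function names `quantize_4bit` and `dequantize_4bit` and the random test vector are made up for illustration.

```python
import random

def quantize_4bit(values):
    """Uniform 4-bit quantization: map each float to one of 16 levels."""
    lo, hi = min(values), max(values)
    scale = (hi - lo) / 15 or 1.0  # 16 levels means 15 steps; avoid a zero scale
    codes = [round((v - lo) / scale) for v in values]  # integers in 0..15
    return codes, lo, scale

def dequantize_4bit(codes, lo, scale):
    """Rebuild approximate floats from the 4-bit codes."""
    return [lo + c * scale for c in codes]

random.seed(0)
vector = [random.gauss(0.0, 1.0) for _ in range(1024)]  # made-up data

codes, lo, scale = quantize_4bit(vector)
restored = dequantize_4bit(codes, lo, scale)

# Rounding to the nearest level costs at most half a step per value.
max_error = max(abs(a - b) for a, b in zip(vector, restored))

bytes_fp16 = len(vector) * 2   # 16 bits per value
bytes_4bit = len(vector) // 2  # two 4-bit codes per byte, ignoring lo and scale

print("fp16 bytes:", bytes_fp16, "4-bit bytes:", bytes_4bit)
print("largest rounding error:", max_error, "step size:", scale)
```

The memory drops by a factor of four compared with 16-bit storage, and every value picks up a rounding error of up to half a step. That leftover error is the precision loss described above.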

TurboQuant uses a compression method called PolarQuant to restructure the KV cache by simplifying its geometry, and then layers a QJL (Quantized Johnson-Lindenstrauss) algorithm on top for error checking. The QJL process reminds me of the parity check in certain storage RAID levels, and it might even compensate when certain information is evicted from the KV cache.
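Here is a rough Python sketch of that two-stage idea as I understand it. It is not Google's implementation. The first stage, `coarse_quantize`, is a plain uniform quantizer standing in for PolarQuant, and the second stage keeps one sign bit per random projection of the leftover error, which is the general idea behind a quantized Johnson-Lindenstrauss sketch. All function names and sizes are made up for illustration, and the number of projections `m` is kept deliberately large so the demo is not too noisy.

```python
import math
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def coarse_quantize(vec, bits=2):
    """Stage 1 stand-in: uniform scalar quantization to 2**bits levels."""
    lo, hi = min(vec), max(vec)
    steps = 2 ** bits - 1
    scale = (hi - lo) / steps or 1.0
    return [lo + round((v - lo) / scale) * scale for v in vec]

def sign_sketch(proj, residual):
    """Stage 2: keep only the sign of each random projection (1 bit each)."""
    return [1 if dot(row, residual) >= 0 else -1 for row in proj]

def estimate_residual_dot(proj, signs, residual_norm, query):
    """Estimate <query, residual> from the sign bits and the residual norm."""
    total = sum(dot(row, query) * s for row, s in zip(proj, signs))
    return math.sqrt(math.pi / 2) * residual_norm * total / len(proj)

random.seed(0)
d, m = 16, 2048  # vector size and number of projections (assumed values)
proj = [[random.gauss(0, 1) for _ in range(d)] for _ in range(m)]
key = [random.gauss(0, 1) for _ in range(d)]
query = [random.gauss(0, 1) for _ in range(d)]

key_hat = coarse_quantize(key)
residual = [k - kh for k, kh in zip(key, key_hat)]
residual_norm = math.sqrt(dot(residual, residual))
signs = sign_sketch(proj, residual)

exact = dot(query, key)
coarse = dot(query, key_hat)
corrected = coarse + estimate_residual_dot(proj, signs, residual_norm, query)

print("exact:", exact)
print("coarse only:", coarse)
print("coarse + sign-sketch correction:", corrected)
```

The correction is right on average rather than on every single query, so any one run can land closer to or further from the exact value. That is also where the RAID parity comparison stops working: parity rebuilds lost data exactly, while the sign bits only give a statistical estimate of what the first stage threw away.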

It will be interesting to see how TurboQuant is adopted and whether it will actually have any impact on the 2026 memory shortage, aside from the apparent manipulation of memory stocks.

Related Web URL: https://techcrunch.com/2026/03/25/google-turboquan...