Fine-Tuning LLMs on a Local Multi-GPU AI Workstation

Author: Dennis Garcia

Phison aiDAPTIV

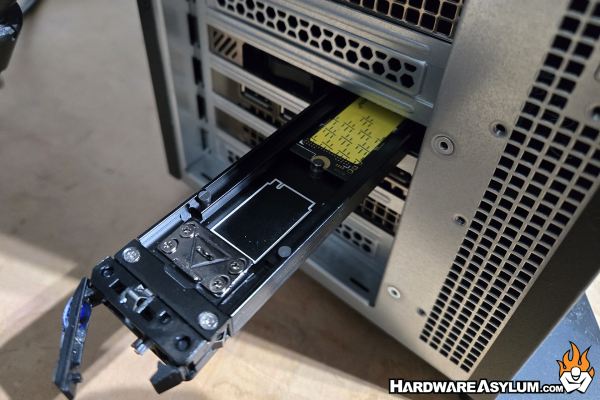

The final software package I am currently using is a pseudo-commercial product from Phison called aiDAPTIV. The best way I can describe it is a software-supported hardware platform built around a special high-endurance SSD. Most enterprise SSDs are designed for extended write cycles, which allows them to handle the data center environment. The aiDAPTIV SSD takes things further by delivering 100 DWPD (drive writes per day) of endurance, which lets it replace the DRAM offload layer used in DeepSpeed.
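To put the 100 DWPD rating in perspective, here is a rough back-of-the-envelope calculation. DWPD multiplied by drive capacity and the rated period gives the total data the drive is rated to absorb. The capacity, the rated period, and the 1 DWPD comparison drive are my own placeholder assumptions for illustration, not published aiDAPTIV specifications.

```python
def total_writes_tb(dwpd: float, capacity_tb: float, years: float) -> float:
    """Total terabytes a drive is rated to absorb over the rated period."""
    return dwpd * capacity_tb * 365 * years


# Hypothetical figures: only the 100 DWPD rating comes from the article.
CAPACITY_TB = 2.0       # assumed drive capacity
RATED_YEARS = 5.0       # assumed rated period
COMPARISON_DWPD = 1.0   # assumed rating for an ordinary enterprise drive

high_endurance = total_writes_tb(100, CAPACITY_TB, RATED_YEARS)
comparison = total_writes_tb(COMPARISON_DWPD, CAPACITY_TB, RATED_YEARS)

print(f"100 DWPD drive:   {high_endurance:,.0f} TB written")
print(f"1 DWPD drive:     {comparison:,.0f} TB written")
print(f"Endurance ratio:  {high_endurance / comparison:.0f}x")
```

The point is simply that an offload layer rewrites its contents constantly during training, so the endurance rating matters far more here than it does for ordinary storage.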

What this translates to is the ability to train LLMs on workstation hardware where it would otherwise be impossible. For instance, with DeepSpeed you can offload parts of the LLM to either DRAM or NVMe. Offloading to DRAM alone works great, but when switching to NVMe there is a bug in the software that still streams the data to DRAM and eventually crashes the system.
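For reference, the DRAM versus NVMe choice in DeepSpeed comes down to the `device` field in the ZeRO stage 3 offload sections of the config. The following is a minimal sketch that builds such a config as a Python dictionary. The helper name `zero3_offload_config`, the batch size, and the path `/mnt/nvme_offload` are placeholders of my own; a real setup will need further tuning beyond what is shown.

```python
import json
from typing import Optional


def zero3_offload_config(device: str, nvme_path: Optional[str] = None) -> dict:
    """Build a minimal DeepSpeed ZeRO-3 config offloading to 'cpu' (DRAM) or 'nvme'."""
    if device not in ("cpu", "nvme"):
        raise ValueError("device must be 'cpu' or 'nvme'")

    offload = {"device": device, "pin_memory": True}
    if device == "nvme":
        if nvme_path is None:
            raise ValueError("nvme_path is required when device is 'nvme'")
        offload["nvme_path"] = nvme_path

    return {
        "train_micro_batch_size_per_gpu": 1,  # placeholder value
        "bf16": {"enabled": True},
        "zero_optimization": {
            "stage": 3,  # parameter offload requires ZeRO stage 3
            "offload_param": dict(offload),
            "offload_optimizer": dict(offload),
        },
    }


# Hypothetical mount point for the NVMe drive.
config = zero3_offload_config("nvme", "/mnt/nvme_offload")
print(json.dumps(config, indent=2))
```

Calling `zero3_offload_config("cpu")` gives the DRAM-only variant, which is the configuration that worked reliably for me.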

The Phison aiDAPTIV software is very similar but will only stream the model weights to the NVMe drive, with a slight overhead in DRAM for staging. The software then handles swapping weights and tensor information in and out of the GPU as needed, in batches that you can configure. For the end user this removes the memory barrier for LLM training and replaces it with a dependency on the available storage capacity of the aiDAPTIV SSD. Simply put, you can train just about any LLM provided you have enough storage space.
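To get a feel for how much storage "enough" might be, here is a rough estimate based on the commonly cited rule of thumb of about 16 bytes per parameter for mixed-precision training with Adam (excluding activations). This is a generic approximation, not a description of how aiDAPTIV lays out data on the drive, which is not documented. The model sizes and the SSD capacity are hypothetical.

```python
# Rule-of-thumb bytes per parameter for mixed-precision Adam training:
# 16-bit weights + 16-bit gradients + 32-bit master weights,
# plus 32-bit Adam momentum and variance. Activations are not included.
BYTES_PER_PARAM = 2 + 2 + 4 + 4 + 4


def training_state_gb(num_params: int, bytes_per_param: int = BYTES_PER_PARAM) -> float:
    """Approximate size of weights, gradients, and optimizer state in GB."""
    return num_params * bytes_per_param / 1e9


def fits_on_drive(num_params: int, capacity_gb: float) -> bool:
    """True if the estimated training state fits in the given capacity."""
    return training_state_gb(num_params) <= capacity_gb


SSD_CAPACITY_GB = 2000.0  # hypothetical drive capacity

for name, params in [("7B", 7_000_000_000), ("70B", 70_000_000_000)]:
    size = training_state_gb(params)
    verdict = "fits" if fits_on_drive(params, SSD_CAPACITY_GB) else "does not fit"
    print(f"{name}: ~{size:,.0f} GB of training state, {verdict} in {SSD_CAPACITY_GB:,.0f} GB")
```

By this estimate a 7B model needs roughly 112 GB of training state, which is far beyond the VRAM of a typical workstation GPU but trivial for an SSD.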

As with most software, there are some caveats. Phison is rather slow when it comes to adding model support to their software, and there is a chance that your favorite model may never be supported. Currently, training seems to be limited to language models only; image and multimodal models do not appear to be supported. There is also a dataset limitation, with only a small number of formats being understood for processing. Maybe one of the biggest issues (or benefits, depending on your outlook) is that the software only works with the aiDAPTIV SSD. This shouldn’t be an issue, but the drive is neither cheap nor widely available. What's more, if Phison decides to pull the drive, the software becomes worthless.

On a positive note, the sample software in their GitHub repository implies that you could write in support for additional datasets and expand it to support image models and your favorite LLM architecture. Given that the software is basically an undocumented Python library, there are options available provided you can work out the memory swapping.

I have both the Python and Docker versions of aiDAPTIV installed. When working with the Python code, the environment matters, since it appears they only support a narrow range of platforms. If you are just interested in model training, though, the Docker install works pretty well.