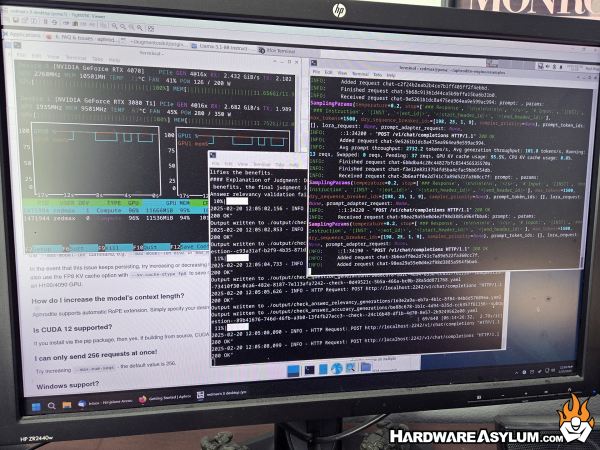

Fine-Tuning LLMs on a Local Multi-GPU AI Workstation

Author: Dennis Garcia

Conclusion

I had intended these last two articles to be a high-level overview of the software I am currently using on my AI workstation and to draw attention to some of the other projects out there. These range from a ChatGPT-style web interface to node-based image generation. The possibilities are endless and growing every day.

Over the past couple of years I have been learning a ton about AI, LLMs, and how enthusiast hardware factors in to make everything work. What I discovered is that even after forty years nobody has really figured out what to use AI for. For instance, I was watching some old episodes of the Computer Chronicles where they discussed AI in 1984 and 1985. Back then AI could be equated to a decision tree, despite the model actually being constructed as a vector data store. Not much has changed in terms of architecture, but advances in computer hardware and processing have allowed us to fill the gaps and expand on the basic concept.

Instead of needing to build a decision tree interface to get an answer, we can now just type a question and expect the model to understand it and generate a response. It’s not so much that AI has advanced but rather that the hardware has finally enabled the software to be useful. Now that researchers and developers have the tools, there has been a land rush to figure out what can be done while ignoring everything else, because if we don’t build it someone else will.

That is why OpenClaw exploded in just a matter of months. Someone figured out how to make AI useful with existing tools and simply repackaged them in a different way. It just happens to be the flavor of the month, and at some point there will be another development.

I fully expect that this AI boom will reach a crest at some point, and we have already seen signs of it happening. The largest indicator is that advances in model quality have become incremental: the models still do the same basic thing and are just larger now. The massive growth in cloud models is just an attempt to include more parameters and more knowledge to make them more powerful. Local and hobby models have started appearing as MoE (Mixture of Experts) designs, which combine multiple smaller expert networks in one model and activate only some of them at a time, in an attempt to increase the knowledge base without the hardware demands.

For the longest time CPUs and GPUs were growing at a steady rate, and eventually they stopped. The growth we see in the processor space is not from the chips getting any faster but from there being more cores available. In a way, older CPUs were actually faster than current versions when you compare single-core performance, but they will lose every time due to modern versions being able to run parallel processes. I believe we are at a similar stage in AI growth, where what can be done has already happened and it is now just a matter of scale and balancing that with the cost of hardware.

Of course, where this will really play out is in the datacenter space, where the major limitation is available power, not only to run the servers but also to run the cooling systems. The only way around this is to build more datacenters, and eventually the resources will run out.

Thank you for checking out my AI series. If you have any questions or comments, you know how to contact me.