Building a Multi-GPU AI Workstation on a Budget

Author: Dennis Garcia

Hardware Selection - Mobo and GPU

Running AI tasks and applications is heavily dependent on available memory, and the Hardware Asylum Labs workstation takes a min/max approach to the hardware selection.

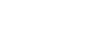

I will start with the motherboard and processor combo. TRX40 was the last Threadripper platform to support standard DDR4 DRAM, making it an excellent HEDT platform in the consumer space. While the 4.5GHz Threadripper 3960X and 256GB of supported DRAM are nice, the real reason for using a Threadripper over a standard Ryzen or Intel Core CPU is the total number of PCI Express lanes. My Threadripper 3960X has 72 usable Gen4 lanes while a Ryzen 9 9950X only has 24.

When SLI and CrossFire were relevant I would include a nice chart showing the PCI Express layout and provide my GPU Index for any particular motherboard. This factored in total bandwidth available to each PCI Express slot, distance between the slots and how many total GPUs could be supported. While SLI and CrossFire are no longer relevant, it would seem in the age of AI hardware the GPU Index could become useful again.

For instance, almost every X870E motherboard supporting the Ryzen 9 9950X has two 16x expansion slots onboard: one for primary graphics and a secondary slot for accessories. If you populate this secondary slot, the motherboard will divide the 16 lanes of PCI Express between the two, making it an 8x/8x configuration. That lowers the effective bandwidth per slot and, despite the PCI Express Gen5 support, it can impact performance and latency.

On my ROG Strix TRX40-E Gaming motherboard I have a total of three 16x PCI Express slots and all three will run at full bandwidth regardless of what I plug in.
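To put rough numbers on the slot comparison above, here is a minimal sketch of the theoretical link bandwidth in each case. It assumes the published per-lane PCI Express transfer rates with 128b/130b encoding, and it assumes the RTX A4500 is a Gen4 x16 card, so a Gen5 slot cannot lift it above Gen4 speeds. The function names are illustrative only, and real-world throughput will be lower once protocol overhead is included.

```python
# Raw per-lane transfer rate in GT/s for each PCI Express generation.
GT_PER_S = {3: 8.0, 4: 16.0, 5: 32.0}
ENCODING = 128 / 130  # 128b/130b line encoding used by Gen3 and later


def link_bandwidth_gbs(gen: int, lanes: int) -> float:
    """Theoretical one-direction bandwidth in GB/s, before protocol overhead."""
    return GT_PER_S[gen] * ENCODING / 8 * lanes


def negotiated_link(slot_gen: int, slot_lanes: int, card_gen: int, card_lanes: int):
    """A link runs at the lower generation and narrower width of slot and card."""
    return min(slot_gen, card_gen), min(slot_lanes, card_lanes)


# Assumption: the GPU is a Gen4 x16 device (as the RTX A4500 is specified).
CARD = (4, 16)

# Slot layouts described in the article.
SLOTS = {
    "TRX40, Gen4 x16 slot": (4, 16),
    "X870E, Gen5 slot split to x8": (5, 8),
}

for name, slot in SLOTS.items():
    gen, lanes = negotiated_link(*slot, *CARD)
    print(f"{name}: Gen{gen} x{lanes}, {link_bandwidth_gbs(gen, lanes):.1f} GB/s")
```

Under these assumptions the Gen4 card in a split Gen5 slot ends up with half the theoretical bandwidth of the same card in a full x16 slot, which is the effect described above.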

As a hardware reviewer I often have excess hardware lying around, so I decided to put the TRX40 to work. I also had other reasons, which we will dive into a little later.

This might be the single most debated component of my build. I am running 2x RTX A4500 GPUs. These are Ampere generation (30-Series) and equivalent to the RTX 3080. The major difference is that the A4500 comes with 20GB of onboard VRAM, faster memory clocks and a blower style cooler.

At one point I tried a number of different GPUs. First were 2x RTX A4000 cards, which are single slot GPUs with only 16GB of VRAM each. I found the coolers too restrictive for workstation usage, and the cards were eventually moved to the datacenter server. That machine is an HP DL360 1U with some special upgrades that allow me to run dual GPUs without issue.

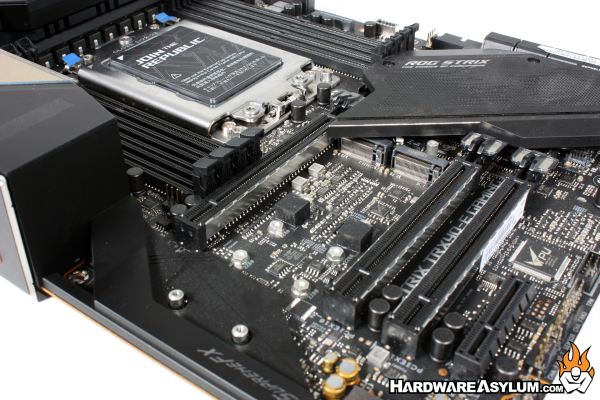

Next, I tried 2x RTX 3090 GPUs. These are actually what most AI hobbyists pick due to the 24GB of onboard memory, higher clock speeds and rather low cost. Unfortunately, the Founders Edition cooler is a passthrough design and intended to be used in single card configurations. Once you stack two, the heat from the lower GPU flows into the second, causing it to overheat and throttle. That will trigger the first card to also throttle just to stay in sync.

Not wanting to give up, I also considered most consumer triple fan solutions but wanted a compact and quiet AI workstation, not a fan loaded flow bench mirroring what our crypto friends were building. Also, the quad slot coolers wouldn’t fit on this motherboard.

At the time of this article the RTX A4500 was a happy medium at a little over $1000 USD each on eBay. These cards supported the optimization technologies I wanted to use, were dual slot and came in a blower configuration. A5000 cards were more than double that cost and anything in the Ada generation was more than triple. It would seem that even in the second-hand market these professional workstation GPUs still have extremely proud owners.

So, yes, there are faster cards; however, they either have less onboard VRAM or cost twice as much. For this I decided to trade performance for time and really, it’s not all that bad.
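To set the memory figures quoted above side by side, here is a small sketch that totals the VRAM of each two-card pairing I tried and checks it against a weights-only estimate for a model. The per-card VRAM values come from this article; the model size and precision in the example are purely hypothetical, and the estimate ignores KV cache, activations and runtime overhead. Note that the two cards do not form one contiguous pool, so a framework still has to split the model across them.

```python
# VRAM per card in GB, as quoted in the article.
VRAM_GB = {"RTX A4000": 16, "RTX A4500": 20, "RTX 3090": 24}


def total_vram_gb(card: str, count: int = 2) -> int:
    """Combined VRAM across `count` identical cards."""
    return VRAM_GB[card] * count


def weights_gb(params_billions: float, bits_per_param: int) -> float:
    """Weights-only footprint in GB (1 GB = 1e9 bytes); excludes all overhead."""
    return params_billions * bits_per_param / 8


# Hypothetical example: a 30B-parameter model stored at 8 bits per parameter.
needed = weights_gb(30, 8)

for card in VRAM_GB:
    total = total_vram_gb(card)
    verdict = "fits" if needed <= total else "does not fit"
    print(f"2x {card}: {total} GB total, {needed:.0f} GB of weights {verdict}")
```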